For years, page speed in SEO felt like a sliding scale. A slower site might lose a few positions, see slightly lower crawl frequency, or convert a bit worse. It mattered, but you could often get away with it.

AI-driven search changes the shape of the problem. In many AI answer experiences, the question isn’t “Did you rank #3 or #8?” It’s “Did you get used as a source at all?” Once the answer is generated, anything not pulled into that answer is effectively absent for that query. That’s why several GEO/AEO practitioners describe AI visibility as binary: you’re either extractable and selected, or you’re invisible for that moment.

A big reason is timing. Classic Google Search is largely asynchronous: Google can crawl and process your page now, then show you later, after it has had time to index, evaluate, and rank. Many AI answer systems work in a more synchronous mode when they use live retrieval: the user asks a question, and the system fetches supporting sources in real time during the user session. That means the system has a practical time budget for how long it can spend fetching pages before it needs to start writing. Slow sources don’t just “rank lower”; they often don’t make it into the set of sources the model can read at all. (Single Grain on page speed and LLM content selection)

This is where Time to First Byte (TTFB) becomes an early filter. TTFB is the time it takes for your server to start responding after a request is made. If that first response is late, you can fail the inclusion test before the system even sees your content.

That’s the lens for this article: not “speed as a ranking factor,” but “speed as availability.” And it’s also why BeSeenBy.ai focuses on diagnosing technical blockers that prevent retrieval and extraction—so you can see when TTFB is pushing you out of consideration, and which URLs are most at risk.

How AI Answers Are Assembled

When an AI “answers with sources,” it’s usually doing some version of Retrieval‑Augmented Generation (RAG). In plain terms: before the model writes its response, it first goes out and fetches supporting information from external sources (web pages, indexes, databases), then uses that retrieved text as the grounding context for what it generates. (AWS’s RAG overview)

A simplified RAG workflow looks like this:

- The user asks a question.

- The AI runs a retrieval step: it queries multiple sources in parallel (think “mini search + fetch”).

- The AI selects the best chunks it managed to retrieve in time.

- The model generates the final answer using those chunks as evidence.

The key detail for performance is the time budget. LLMs are expensive to run and users expect chat to feel responsive, so real-time retrieval can’t wait indefinitely for slow servers. If a page doesn’t start responding quickly, the system often drops it and moves on with whatever it already has, even if your content is stronger.
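To make the time budget concrete, here is a minimal sketch of a parallel fetch with a deadline. It is not how any particular AI system works; the source names, the simulated latencies, and the 200 ms budget are all assumptions chosen for illustration, and `fetch` is a stand-in that sleeps instead of making a real HTTP request.

```python
import asyncio

# Hypothetical sources with simulated time-to-first-byte, in seconds.
SIMULATED_TTFB = {
    "fast.example": 0.01,
    "ok.example": 0.05,
    "slow.example": 0.50,
}


async def fetch(source: str) -> str:
    """Stand-in for a real HTTP fetch: waits for the simulated latency."""
    await asyncio.sleep(SIMULATED_TTFB[source])
    return f"text from {source}"


async def retrieve(sources: list[str], budget_s: float) -> dict[str, str]:
    """Fetch all sources in parallel; keep only those finished within budget."""
    tasks = {asyncio.create_task(fetch(s)): s for s in sources}
    done, pending = await asyncio.wait(tasks.keys(), timeout=budget_s)
    for task in pending:
        task.cancel()  # late sources are abandoned, not waited for
    return {tasks[t]: t.result() for t in done}


def run_demo() -> dict[str, str]:
    # Assumed budget of 200 ms; slow.example (500 ms) misses it.
    return asyncio.run(retrieve(list(SIMULATED_TTFB), budget_s=0.2))
```

Calling `run_demo()` returns text for the two faster sources only. The slow source is never read, regardless of how good its content is.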

You can sometimes see this behavior in your own logs. A common pattern is a request that the client abandons before your server returns a full response. In NGINX environments this often shows up as status 499 (Client Closed Request), a non-standard code that NGINX logs when the client closes the connection while the server is still processing the request.
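One way to look for this pattern is to count 499 responses per user agent in your access log. The sketch below assumes NGINX's default "combined" log format; the sample lines, IP addresses, and the `ExampleAIBot` user agent are made up for illustration.

```python
import re
from collections import Counter

# Matches NGINX's default "combined" access log format.
LINE_RE = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<request>[^"]*)" (?P<status>\d{3}) \S+ '
    r'"(?P<referer>[^"]*)" "(?P<agent>[^"]*)"'
)


def count_499_by_agent(lines):
    """Count client-aborted requests (status 499) per user agent."""
    counts = Counter()
    for line in lines:
        match = LINE_RE.match(line)
        if match and match.group("status") == "499":
            counts[match.group("agent")] += 1
    return counts


# Made-up sample lines; ExampleAIBot is a hypothetical crawler name.
SAMPLE_LOG = [
    '192.0.2.10 - - [01/Jan/2025:10:00:00 +0000] "GET /guide HTTP/1.1" 499 0 "-" "ExampleAIBot/1.0"',
    '192.0.2.11 - - [01/Jan/2025:10:00:01 +0000] "GET /guide HTTP/1.1" 200 5120 "-" "Mozilla/5.0"',
    '192.0.2.10 - - [01/Jan/2025:10:00:02 +0000] "GET /pricing HTTP/1.1" 499 0 "-" "ExampleAIBot/1.0"',
]
```

If one bot accounts for most of the 499s on your key URLs, that suggests it is giving up before your server responds.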

This is why the first byte matters so much in AI retrieval: if the bot gives up before it receives anything usable, your page never even reaches the content evaluation stage.

In live retrieval, sources that don’t respond fast enough get dropped before the model reads them.

Why TTFB Matters Early

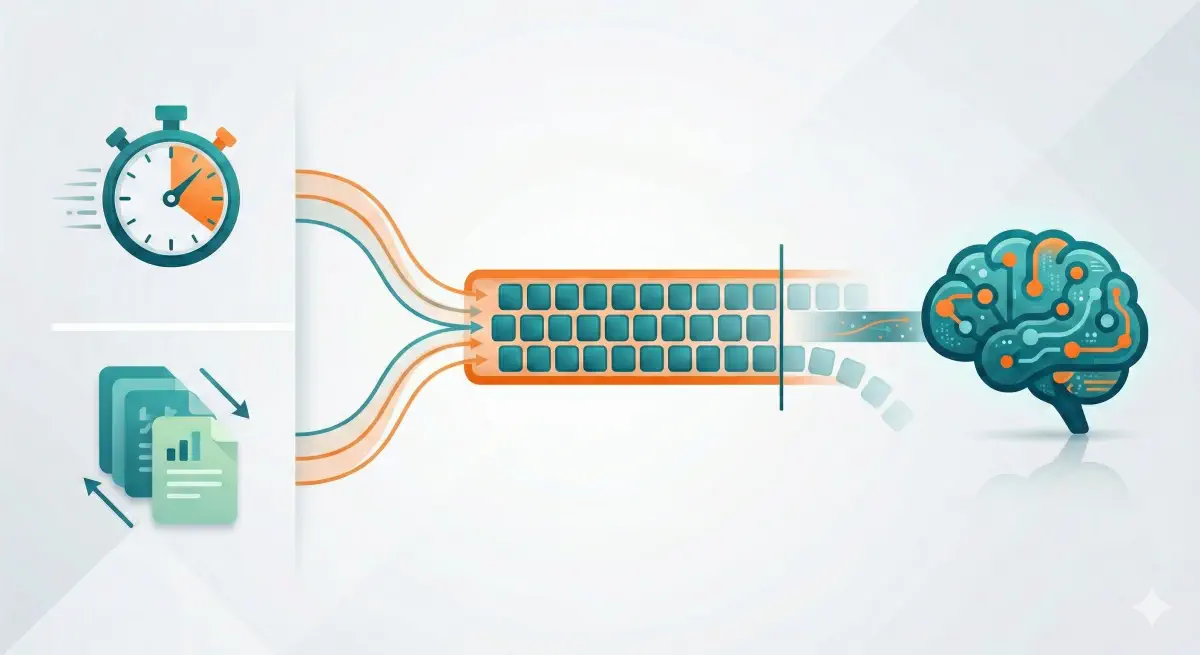

When an AI system answers with sources, it’s working under a grounding budget. That budget has two constraints.

First, a latency budget: the system can’t wait indefinitely for slow pages because the user is waiting for the answer. In live retrieval, the system will often fetch several candidate pages in parallel and proceed with whichever sources return usable content fast enough.

Second, a context (token) budget: retrieved text has to fit into the model’s context along with the user’s question, and it still needs space left for the model’s response. Microsoft’s grounding guidance makes this selection constraint clear: the context window is limited, so you must choose what to include and leave room for the response.

Dan Petrovic’s research on Google’s grounding chunks makes the context side concrete for Google’s Gemini grounding: grounding tends to plateau around ~540 words (~3,500 characters), and pages above ~2,000 words see diminishing returns in how much of the page is actually selected.
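The context side can be sketched as a selection problem: given scored chunks and a fixed budget, only some of them fit. The greedy strategy, the relevance scores, and counting words instead of tokens are all assumptions for illustration; the ~540-word budget is the figure cited above, and how any real system selects chunks is not public.

```python
def select_chunks(chunks, word_budget):
    """Greedily keep the highest-scoring chunks that still fit the budget.

    chunks: list of (score, text) pairs; higher score means more relevant.
    Returns the selected texts and the number of words used.
    """
    selected, used = [], 0
    for score, text in sorted(chunks, key=lambda c: c[0], reverse=True):
        words = len(text.split())
        if used + words <= word_budget:
            selected.append(text)
            used += words
    return selected, used


# Hypothetical chunks of 300, 200, and 250 words with made-up scores.
CANDIDATES = [
    (0.9, "alpha " * 300),
    (0.8, "beta " * 200),
    (0.7, "gamma " * 250),
]
```

With a 540-word budget, `select_chunks(CANDIDATES, 540)` keeps the first two chunks (500 words) and drops the third, even though it was retrieved successfully.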

Put those together and you get a race:

- The AI pings many candidate pages.

- It can only ground on a limited amount of text from a limited number of sources.

- If your page is late to start responding, it may be excluded before your content is even considered.

That’s why TTFB is the gatekeeper. It’s the earliest point where you can lose the latency budget. If the first byte arrives too late, the system may drop the request before it receives any usable text to ground on.

Practical benchmarks you can use (not “LLM rules,” just good operational targets):

- Strong (<600 ms): Lighthouse’s server response time audit uses 600 ms as its threshold.

- Solid (≤0.8 s): the common “good” threshold, as in Shopify’s TTFB guidance.

- High risk (>1.8 s): Commonly labelled “poor” in the same guidance.
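To check a URL against these benchmarks, you can approximate TTFB with Python's standard library. This is a rough sketch, not a replacement for a proper monitoring tool: it measures from one location, on a fresh connection (so DNS, TCP, and TLS setup are included), and the "in between" label for the 0.8 s to 1.8 s band is my own placeholder, since the benchmarks above do not name it.

```python
import http.client
import time
from urllib.parse import urlsplit


def measure_ttfb_ms(url: str, timeout: float = 10.0) -> float:
    """Approximate TTFB: time from sending the request until the
    status line and headers have been received."""
    parts = urlsplit(url)
    conn_cls = (
        http.client.HTTPSConnection
        if parts.scheme == "https"
        else http.client.HTTPConnection
    )
    target = parts.path or "/"
    if parts.query:
        target += "?" + parts.query
    conn = conn_cls(parts.netloc, timeout=timeout)
    try:
        start = time.perf_counter()
        conn.request("GET", target)  # connects, then sends the request
        conn.getresponse()  # returns once status line and headers are read
        return (time.perf_counter() - start) * 1000
    finally:
        conn.close()


def classify_ttfb(ms: float) -> str:
    """Map a TTFB reading onto the benchmarks listed above."""
    if ms < 600:
        return "strong"
    if ms <= 800:
        return "solid"
    if ms > 1800:
        return "high risk"
    return "in between"  # placeholder label; this band is not named above
```

For example, `classify_ttfb(measure_ttfb_ms("https://example.com/"))` gives a single reading; take several at different times of day before drawing conclusions.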

This is the logic behind treating TTFB as a gating metric in BeSeenBy.ai: miss the grounding window and you don’t get “ranked lower” inside the answer—you often don’t make it into the grounded evidence set at all.

TTFB is the earliest point where a slow server can cause a source to be dropped entirely.

When Fast Is Not Enough: Empty Shells and Broken Structure

A low TTFB can win you the retrieval race, but it doesn’t guarantee the AI can actually read anything useful once it arrives. There are two common failure modes: you return a fast-but-empty HTML shell, or your page structure is so unstable that extraction gets messy.

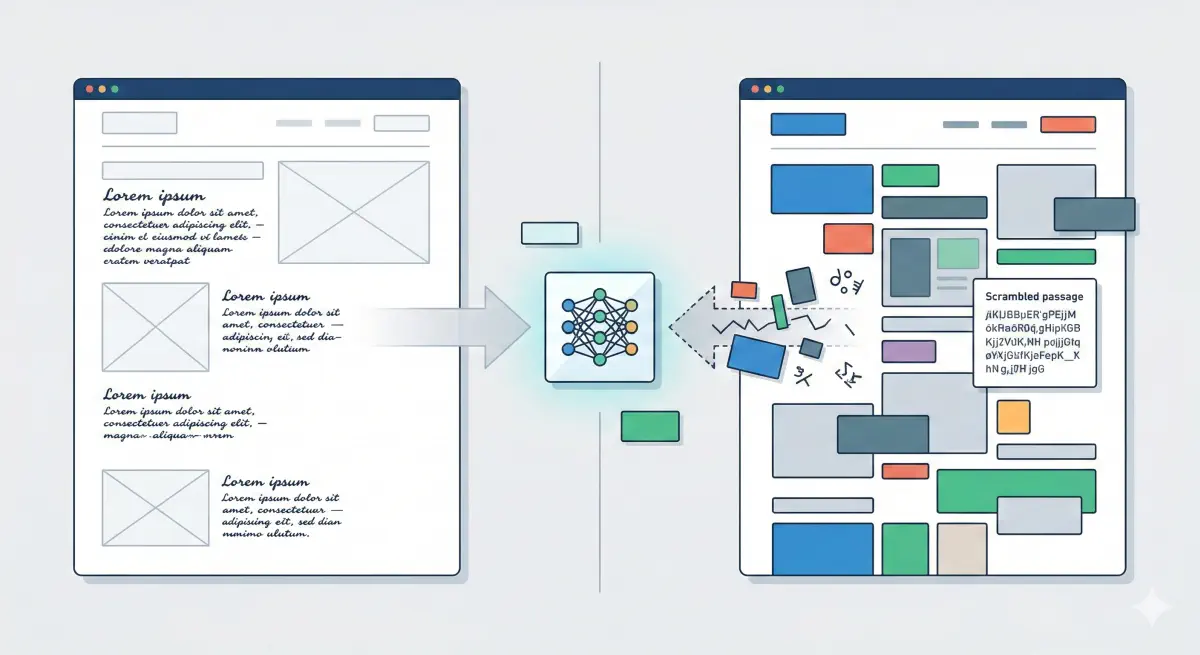

The Empty Shell Trap: Fast HTML, Zero Text

Many modern sites ship a minimal HTML document and rely on client-side JavaScript to fetch and inject the real content after load. Humans see the final rendered page. A lot of AI crawlers never do.

Vercel’s crawler research found that major AI crawlers do not render JavaScript in most cases, which means they only see the initial HTML response. If your real text arrives via JS, those bots effectively see a blank page.

That creates a metric trap: your TTFB can look excellent because you’re quickly returning the shell, but your “time to text” for bots is infinite because the text never arrives in the HTML they fetch.

What this looks like in practice:

- The HTML response contains mostly <script> tags and empty container <div>s.

- Key paragraphs, product details, tables, and even nav links are missing from the raw HTML.

- The visible page for users is “built” after JS runs, which the crawler skips.
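A quick way to spot the pattern above is to count the words a non-rendering crawler could read in the raw HTML. The sketch below uses Python's built-in HTML parser and ignores `<script>`, `<style>`, and `<title>` content; both sample documents are made up, and what counts as "too few words" is a judgment call for your own templates.

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collects text outside <script>, <style>, and <title> elements."""

    SKIP = {"script", "style", "title"}  # title is metadata, not body text

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.parts = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth and data.strip():
            self.parts.append(data.strip())


def visible_word_count(html: str) -> int:
    """Number of words present in the raw HTML without running any JS."""
    parser = VisibleText()
    parser.feed(html)
    parser.close()
    return len(" ".join(parser.parts).split())


# Made-up examples: a JS-only shell and a server-rendered page.
SHELL = (
    '<html><head><title>Shop</title></head><body><div id="root"></div>'
    '<script src="/app.js"></script></body></html>'
)
SSR = (
    "<html><body><main><h1>Returns policy</h1>"
    "<p>You can return any item within 30 days.</p></main>"
    '<script src="/app.js"></script></body></html>'
)
```

Here `visible_word_count(SHELL)` is 0 while `visible_word_count(SSR)` is 10. Run the same function on the raw HTML your server returns for key URLs.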

Fixes that work without rebuilding your site:

- Server-side rendering (SSR) for key templates (homepage, category pages, key articles).

- Prerendering for bots: serve a pre-rendered HTML snapshot to crawlers that don’t run JS. (Prerender’s guide on optimizing for AI crawlers)

- Content-first baseline: ship a content-first HTML baseline, then hydrate interactivity after initial render.

A fast TTFB means nothing if the HTML contains no extractable content.

The Structure Trap: CLS as Extraction Instability

CLS (Cumulative Layout Shift) is usually framed as a user experience metric. Bots don’t “watch” the screen shift. The deeper issue is what causes CLS: late-loading elements that insert themselves into the DOM (ads, embeds, personalization blocks, deferred components). That often changes the reading order and the structure of the page.

Retrieval systems typically chunk pages into segments before they embed them and store them for later matching (vector retrieval). If your page’s structure is volatile—especially above the fold—the chunk boundaries and “what text belongs together” can become inconsistent across fetches and rendering paths. That increases the chance the retriever pulls the wrong chunk or loses the key explanation inside a noisy block. (Agenxus’s GEO guide)
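A toy example shows how an injected block can move chunk boundaries. Fixed-size word chunking is an assumption here; real pipelines may split by tokens, sentences, or DOM structure, and the sample text is made up.

```python
def chunk_words(text: str, size: int) -> list[str]:
    """Split text into consecutive chunks of `size` words."""
    words = text.split()
    return [" ".join(words[i:i + size]) for i in range(0, len(words), size)]


# Made-up page text, fetched once without and once with a late-injected block.
STABLE = "Refunds are issued within five days. Contact support to start a return."
SHIFTED = "Sponsored: try our app. " + STABLE

stable_chunks = chunk_words(STABLE, 6)
shifted_chunks = chunk_words(SHIFTED, 6)
```

In `stable_chunks`, the refund sentence is a chunk of its own. In `shifted_chunks`, the four injected words push it across a boundary, so no single chunk contains the full sentence.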

Fixes that usually move CLS and extraction quality in the same direction:

- Reserve space for images/ads/embeds (explicit dimensions, placeholders).

- Avoid injecting new blocks above existing content after load; load them below, or keep a stable placeholder.

- Reduce third-party scripts that mutate the DOM late, or isolate them to non-critical areas.

INP as Agentic Friction

INP (Interaction to Next Paint) measures responsiveness to user interactions. For pure reading crawlers, INP is less relevant. It becomes relevant when the AI is acting like an agent: clicking, filtering, opening modals, stepping through checkout, filling forms.

Agent-style tool use is an active area of investment across platforms, and the direction is clear: more “do” workflows, not just “read and summarize.” (Anthropic’s engineering post on advanced tool use) If your site is heavy with long tasks and input delay, even successful retrieval can turn into failed completion (the agent times out, retries, or switches to a competitor).

Fixes that help:

- Reduce main-thread JS work on key flows (forms, filters, checkout).

- Split large bundles, delay non-critical scripts, and audit third-party tags.

- Test your critical flows on mid-tier mobile CPU/network, not your laptop.

In BeSeenBy.ai terms, this is why “AI visibility” can fail even when TTFB is good: AI systems need (1) readable content in the initial HTML, (2) stable structure that chunks cleanly, and (3) responsive flows when an agent needs to take action—not just cite a paragraph.

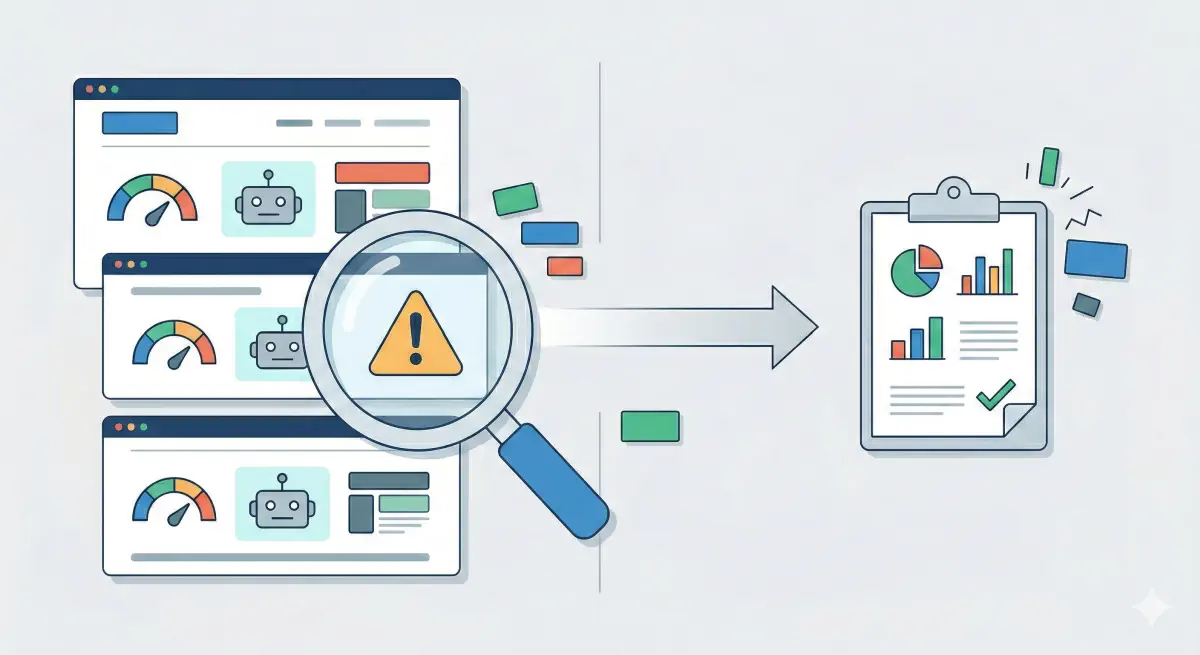

Diagnose Your AI Visibility Risk

Most teams are attacking “AI visibility” from the content side: rewriting pages to answer prompts better, adding more schema, publishing FAQ blocks, tracking prompt performance, monitoring mentions, and iterating on topical coverage. Those tactics can help once you’re in the game.

The problem is that a lot of sites aren’t eligible yet.

If an AI system can’t reliably fetch your page, or it fetches a fast-but-empty HTML shell, or it times out before the first byte, your content work doesn’t get a chance to matter. You’re not “ranking lower” inside AI answers—you’re failing the inclusion step that happens before the model even reads your words. That’s why BeSeenBy.ai is positioned as a technical eligibility check: it focuses on access and extraction prerequisites before you invest in higher-level GEO/AEO work.

Here’s a diagnostic workflow that keeps you out of guesswork.

Step 1: Check eligibility (are you even readable?)

Run a scan on your core URLs (homepage, top category/service pages, top 3–5 articles). You’re looking for basic prerequisites: allowed crawl policy, successful HTTP access, and extractable content in the initial response.
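The crawl-policy part of this step can be spot-checked with Python's standard `urllib.robotparser`. The robots.txt content and URLs below are made-up samples; substitute your own file and the user-agent tokens of the bots you care about. Passing this check only covers robots.txt, not HTTP access or extractability.

```python
from urllib.robotparser import RobotFileParser

# Made-up robots.txt for illustration.
SAMPLE_ROBOTS = """\
User-agent: GPTBot
Disallow: /private/

User-agent: *
Allow: /
"""


def allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if robots_txt permits user_agent to fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)
```

For instance, `allowed(SAMPLE_ROBOTS, "GPTBot", "https://example.com/private/report")` is `False`, while the same bot is allowed to fetch `https://example.com/guide`.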

Step 2: Check the “race” metrics (TTFB risk zones)

Open the performance section and look at TTFB for each URL. Treat it like a traffic light:

- <200 ms: you’re competing well in retrieval races

- ~600–800 ms: workable, but you can lose to faster competitors

- 1200 ms+: fragile; small slowdowns (load, geography, security checks) can push you into exclusion

Step 3: Check for “false positives” (fast server, invisible content)

If TTFB looks decent but extractability is low, do one manual test any marketer can do:

Right-click → “View page source” (or use view-source: in Chrome). Ask: “Is the main text actually present in the HTML?”

If you mostly see scripts and empty containers, you likely have the empty shell problem: humans see content after JS runs, but bots that don’t execute JS see almost nothing. That’s when SSR/prerendering on key templates becomes higher priority than more content tweaks.

Infrastructure work is expensive and political (hosting, caching, WAF/CDN tuning). You don’t want to do it blindly. A focused eligibility audit lets you pinpoint which URLs fail, how they fail, and what to fix first—so your later investments in content, schema, and prompt strategy have a surface area the models can actually reach.

Run eligibility checks before investing in content strategy and schema optimization.

Infrastructure Fixes That Actually Move the Needle

If you’re consistently over ~600–800 ms TTFB on key URLs, content tweaks won’t save you. You need infrastructure changes that reduce first byte latency for bots and humans alike.

Edge Caching With Serve-Stale Revalidation

The simplest way to win the retrieval race is to stop making every request wait for your origin server.

A common pattern is “serve stale during revalidation”: a cache returns the cached response immediately, even after it has technically expired, and revalidates it in the background. The behavior is defined in RFC 5861 as the stale-while-revalidate Cache-Control extension.
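As a rough illustration of the RFC 5861 behavior, the sketch below builds a `Cache-Control` value with `stale-while-revalidate` and models the decision a cache makes for a response of a given age. The function names and return labels are my own, and the 600/30 second values are example numbers; whether your CDN honors the directive depends on the provider.

```python
def cache_control(max_age: int, swr: int) -> str:
    """Build a Cache-Control value using the RFC 5861 extension."""
    return f"max-age={max_age}, stale-while-revalidate={swr}"


def cache_decision(age: int, max_age: int, swr: int) -> str:
    """Model what a cache may do with a stored response of a given age (seconds)."""
    if age < max_age:
        return "fresh"  # serve from cache, no origin contact needed
    if age < max_age + swr:
        return "serve_stale_and_revalidate"  # respond now, refresh in background
    return "fetch_from_origin"  # too old: the request waits for the origin
```

With `max-age=600, stale-while-revalidate=30`, a response that is 615 seconds old is still served immediately from cache while a background refresh runs. Only after 630 seconds does a request have to wait for the origin.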

Why it matters for AI retrieval: it compresses TTFB from “origin speed” to “edge speed.” If the edge can return something in tens of milliseconds, you stay inside the latency budget, and the AI system gets text early enough to consider you.

Edge caching is the highest-ROI TTFB fix: it replaces origin latency with edge latency.

Geography Still Matters

Even with a well-optimized server, distance adds round-trip time. If your origin is far from where the requester runs, TTFB climbs before your application code even starts doing work.

You don’t need to know exactly where every AI system is hosted to act on this: serve key pages from the edge. A CDN with good cache hit rates removes most of the transcontinental latency because the first byte comes from a nearby point of presence, not from your origin.
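A back-of-the-envelope calculation shows why distance matters. The sketch assumes light travels roughly 200 km per millisecond in optical fiber and that a new HTTPS connection needs about three round trips before the first byte (TCP handshake, TLS 1.3 handshake, then the request itself). It gives a physical lower bound only; real routes are longer, and server processing time comes on top. The distances are hypothetical.

```python
FIBER_KM_PER_MS = 200.0  # approximate speed of light in optical fiber


def min_rtt_ms(distance_km: float) -> float:
    """Lower bound on one round trip over a straight fiber path."""
    return 2 * distance_km / FIBER_KM_PER_MS


def min_first_byte_ms(distance_km: float, round_trips: int = 3) -> float:
    """Lower bound on network time before the first byte on a new connection.

    Assumes 3 round trips: TCP handshake, TLS 1.3 handshake, HTTP request.
    """
    return round_trips * min_rtt_ms(distance_km)
```

For an origin 6,000 km away, `min_first_byte_ms(6000)` is 180 ms before your application does any work. For an edge location 100 km away, `min_first_byte_ms(100)` is 3 ms.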

If you can’t cache everything, cache the things that are most likely to be retrieved and cited: homepage, category/service pages, and the pages that answer “definition” and “how-to” intents.

WAF and Soft Blocks

A common failure mode is accidental throttling. AI crawlers are often bursty (lots of URLs requested in a short window), which can trip bot protections and rate limits.

This matters because a “soft block” doesn’t always look like a clean 403. It can show up as increased challenge pages, delayed responses (TTFB spikes), intermittent timeouts, or inconsistent access by IP/ASN.

Some AI platforms publish explicit WAF guidance and IP lists for allowlisting. Perplexity’s crawler documentation includes WAF configuration guidance and recommends allowlisting their bots when a WAF is in front of the site.

The practical approach:

- If you want AI bots, treat them like important partners: identify the bots you care about, then create explicit allow rules (by verified IP ranges where provided, not just User-Agent strings).

- Monitor for bot-triggered latency spikes and timeouts, not just blocks. A site can be “allowed” but still too slow to be usable.
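Verifying by IP range rather than User-Agent alone can be sketched with the standard `ipaddress` module. The bot name and CIDR ranges below are placeholders (the ranges come from blocks reserved for documentation), not any platform's real ranges; load the actual lists from each platform's published documentation.

```python
import ipaddress

# Placeholder data: ExampleAIBot is hypothetical, and these CIDR blocks are
# reserved documentation ranges, NOT real crawler ranges.
PUBLISHED_RANGES = {
    "ExampleAIBot": ["192.0.2.0/24", "198.51.100.0/25"],
}


def is_verified_bot(user_agent: str, remote_ip: str) -> bool:
    """True only if the User-Agent names a known bot AND the IP is in its ranges."""
    ip = ipaddress.ip_address(remote_ip)
    for bot, cidrs in PUBLISHED_RANGES.items():
        if bot.lower() in user_agent.lower():
            return any(ip in ipaddress.ip_network(cidr) for cidr in cidrs)
    return False
```

A request claiming to be `ExampleAIBot/1.0` from `203.0.113.9` fails the check because the address is outside the listed ranges, which is the case a User-Agent-only rule would miss.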

A site can be technically “allowed” but effectively blocked by WAF rate limits and soft throttling.

Speed Determines Whether You Get Considered

A lot of “AI visibility” work happens at the content layer: better answers, clearer entities, more structured sections, richer schema, prompt tracking. That’s fine, but it assumes something that often isn’t true yet: that the AI can actually fetch and extract your content inside its grounding budget.

Grounding has two constraints. There’s a latency budget (the system can’t wait long during live retrieval) and there’s a context budget (only a limited slice of text can be used as evidence). Microsoft’s grounding guidance describes the practical constraint of fitting grounded content into the context window. Dan Petrovic’s research on Google’s grounding chunks shows the context side clearly: grounding plateaus around ~540 words / ~3,500 characters, and longer pages don’t mean proportionally more grounded text.

That’s why TTFB is such a strong eligibility signal. It’s the earliest point where you can miss the latency window and get excluded before your content is even read. As an operational benchmark, Lighthouse flags server response times over 600 ms on the main document request.

So the right sequence is:

- Make sure your pages are reachable (robots/WAF), fast to respond (TTFB), and actually readable in the initial HTML (not a JS-only shell).

- Then invest in the higher-order work: content strategy, structure, schema, and measurement.

BeSeenBy.ai is designed to make step 1 explicit, measurable, and repeatable—so you can stop guessing whether you’re “visible in AI” and start fixing the concrete blockers that decide eligibility in the first place.