For years, “text-to-HTML ratio” has been treated as a pseudo-metric in SEO audits. In AI retrieval pipelines, the same underlying issue—low information density—can create real costs: more tokens, noisier chunks, and less room for the content that actually answers the question.

This article keeps two things separate: (1) classic Google ranking, where text-to-HTML ratio is not a meaningful metric, and (2) AI retrieval, where information density can affect efficiency and extraction quality. We’ll stay in the second lane, and be explicit about caveats any time we mention Markdown or llms.txt.

From “SEO Myth” to “AI Tax”

Within classic SEO, the ratio has mostly been a distraction. Google has repeatedly said it is not a meaningful ranking metric: John Mueller has called the idea nonsense for SEO and advised people to ignore reports built around it.

AI retrieval systems change what “bloat” costs.

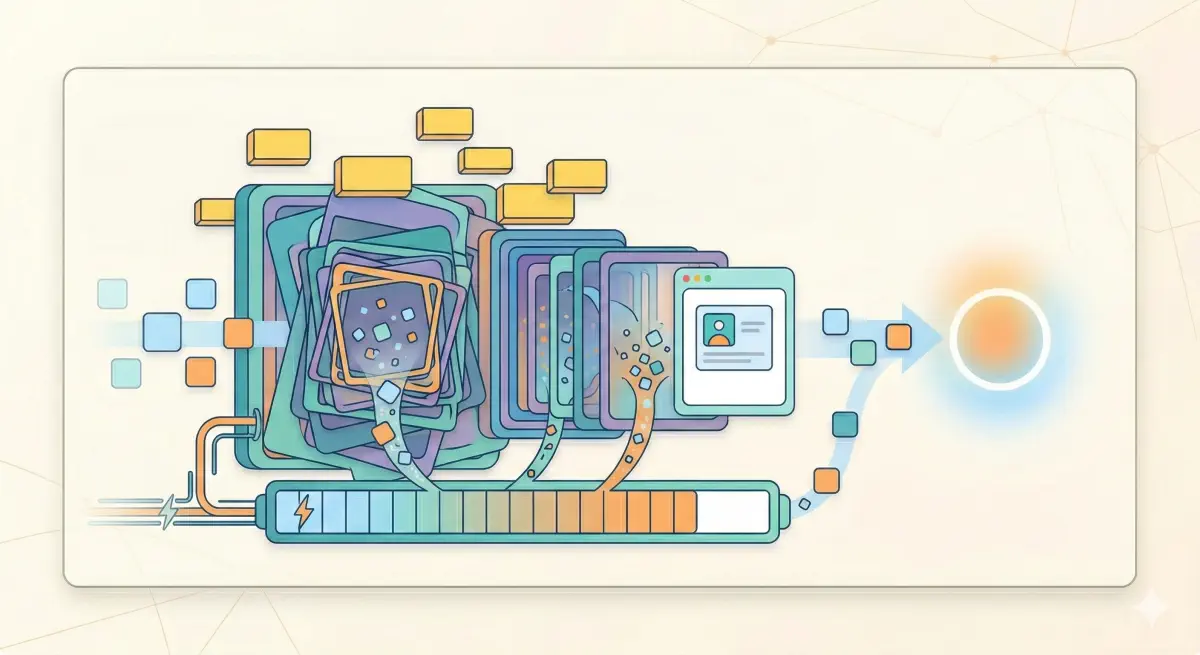

LLMs operate on token economics. They have finite context windows, and processing more tokens costs money and increases the odds the important parts get squeezed out. So every extra layer of wrapper code that survives extraction uses budget that could have gone to your actual content.

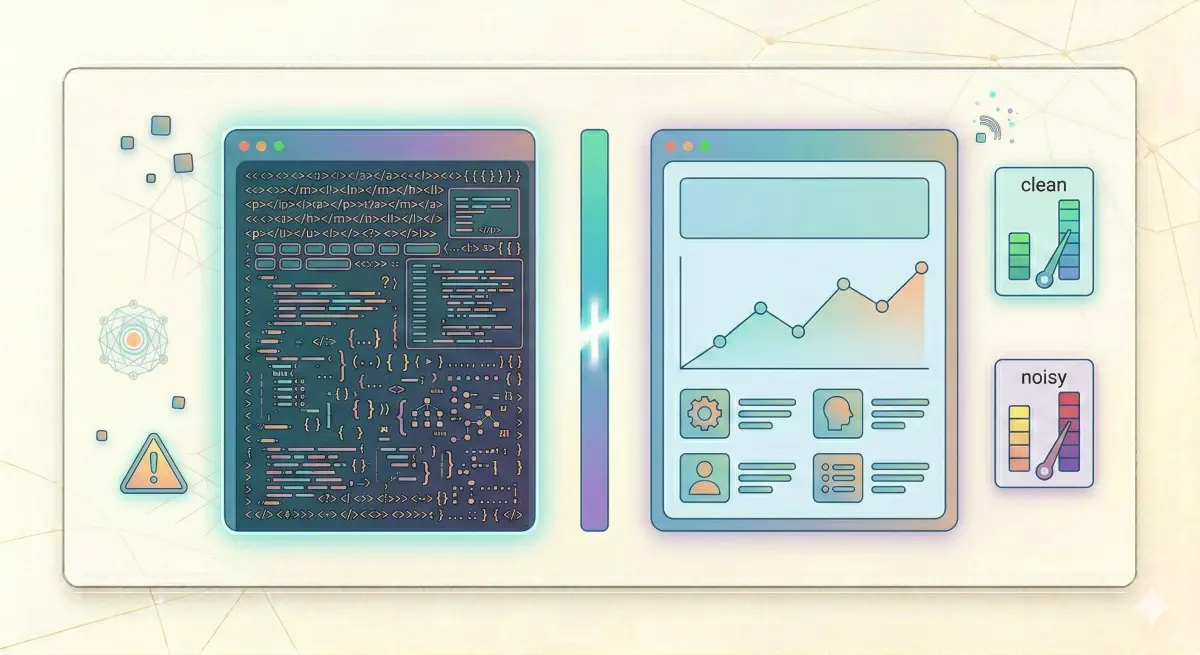

Cloudflare’s “Markdown for Agents” post makes the token gap concrete. In its example, the same page is far smaller in tokens after HTML-to-Markdown conversion: about 16,180 tokens as HTML versus 3,150 as Markdown.

Thesis: a low text-to-HTML ratio may still be a “myth metric” for classic SEO, but it can become a real structural cost in AI systems. It can dilute signal-to-noise, pollute chunks in RAG pipelines, and make your content more expensive to fit into limited model budgets.

We’ll treat this as “plausible and observable,” not “officially confirmed,” because vendors don’t publish a single global rule for what gets included.

The same content in raw HTML can use five times as many tokens as a cleaner representation—a real cost in systems with limited context windows.

The Token Tax: Paying for the Packaging

If you feed raw HTML to an LLM, you’re paying (in tokens) for more than your message. You’re paying for the packaging: wrappers, classes, attributes, repeated navigation, footer boilerplate, and template fragments that often survive extraction.
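To make the packaging cost tangible, here is a minimal sketch that compares a rough token count for raw HTML against the visible text it wraps. The `rough_tokens` function is a crude stand-in (words plus punctuation marks), not any model's real tokenizer, and the sample markup and the names `VisibleText` and `packaging_share` are invented for illustration.

```python
import re
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collect text nodes, skipping the contents of <script> and <style>."""

    def __init__(self):
        super().__init__()
        self.parts = []
        self._skip = 0

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip:
            self._skip -= 1

    def handle_data(self, data):
        if not self._skip and data.strip():
            self.parts.append(data.strip())


def rough_tokens(s):
    # Assumption: words and single punctuation marks approximate tokens.
    # Real tokenizers split differently, so treat the numbers as relative.
    return len(re.findall(r"\w+|[^\w\s]", s))


def packaging_share(html):
    """Return (raw_tokens, text_tokens, share of raw tokens that is markup)."""
    parser = VisibleText()
    parser.feed(html)
    parser.close()
    text = " ".join(parser.parts)
    raw, clean = rough_tokens(html), rough_tokens(text)
    return raw, clean, 1 - clean / raw


# Hypothetical page fragment: one sentence of content inside template wrappers.
sample = (
    '<div class="wrap"><nav class="top"><a href="/">Home</a></nav>'
    '<main><p class="lead">Chunk size affects retrieval precision.</p></main></div>'
)
raw, clean, share = packaging_share(sample)
print(raw, clean, f"{share:.0%}")
```

The absolute numbers depend on the tokenizer, so the useful signal is the gap between the two counts on your own templates.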

Cloudflare’s “Markdown for Agents” post provides a concrete data point: their example page drops from 16,180 tokens in HTML to 3,150 tokens as Markdown—roughly an 80% reduction without changing the meaning.
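The arithmetic behind those figures is easy to check. This short sketch uses only the two token counts quoted from Cloudflare's example; nothing else is assumed.

```python
html_tokens = 16_180      # token count reported for the HTML version
markdown_tokens = 3_150   # token count reported for the Markdown version

reduction = 1 - markdown_tokens / html_tokens
ratio = html_tokens / markdown_tokens

print(f"reduction: {reduction:.1%}")   # reduction: 80.5%
print(f"ratio: {ratio:.1f}x")          # ratio: 5.1x
```

So "roughly 80%" and "about five times" describe the same gap from two directions.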

That is the “AI tax” framing: you can have great content, but if the machine-readable representation is bloated, you compete at a disadvantage in any pipeline that must extract, chunk, retrieve, and fit passages into a limited context.

Cloudflare’s documentation shows this is becoming an explicit web pattern: clients can request text/markdown via the Accept header and receive an edge-converted Markdown representation.
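From the client side, the pattern is an ordinary HTTP request with an Accept header. The sketch below uses Python's standard library; the URL is a placeholder, and whether a given site actually returns Markdown depends on its configuration, so the code checks the response's Content-Type rather than assuming.

```python
import urllib.request


def build_markdown_request(url):
    # Prefer Markdown, but accept HTML as a fallback with lower priority.
    return urllib.request.Request(
        url,
        headers={"Accept": "text/markdown, text/html;q=0.8"},
    )


def fetch(url, timeout=10):
    """Fetch a page and report which representation the server chose."""
    req = build_markdown_request(url)
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        content_type = resp.headers.get("Content-Type", "")
        body = resp.read().decode("utf-8", errors="replace")
    return content_type, body


# Placeholder URL; building the request does not touch the network.
req = build_markdown_request("https://example.com/article")
print(req.get_header("Accept"))
```

Calling `fetch(...)` on a real URL and inspecting the returned content type tells you whether negotiation happened or the server simply sent HTML.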

Caveat: this is evidence that efficiency matters at scale. It is not proof that Markdown directly increases citations, and it does not imply a universal preference across all LLM products.

RAG and the Chunking Failure Mode

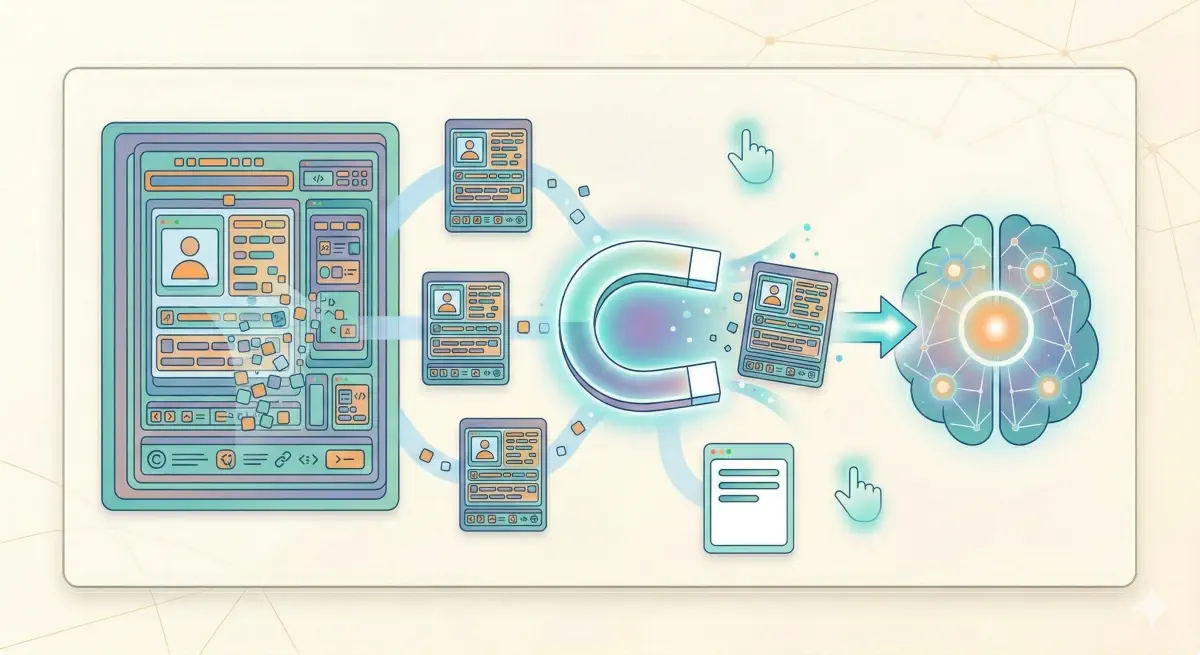

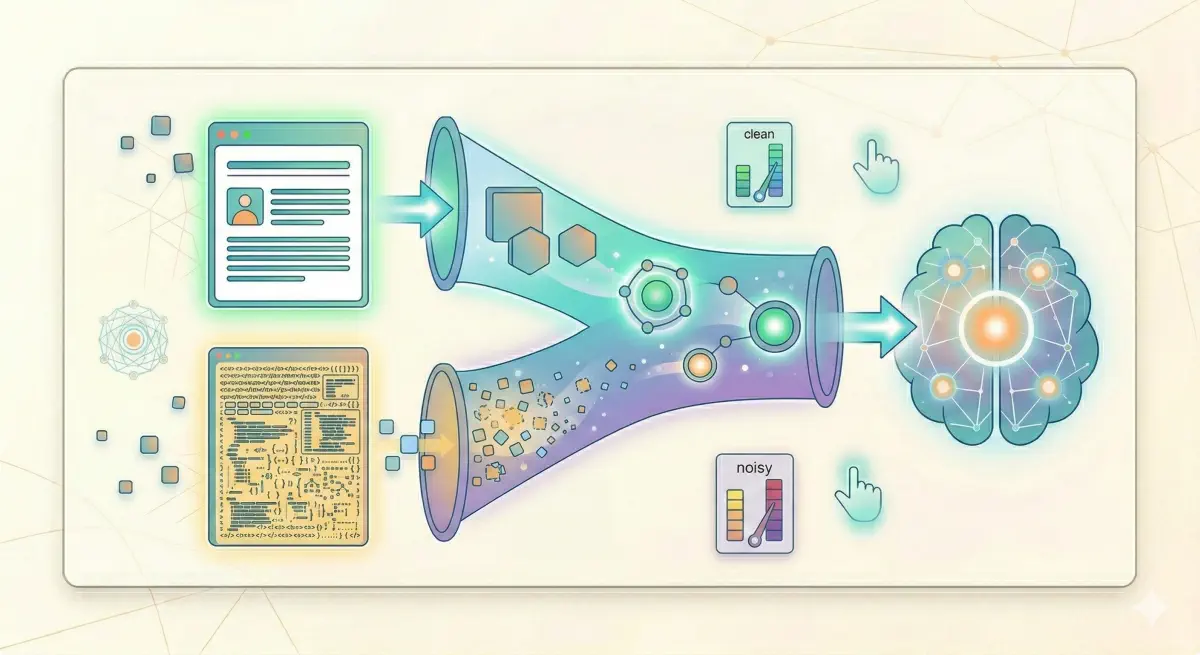

Most AI systems that answer with citations use a Retrieval-Augmented Generation (RAG) pipeline: extract content, split it into chunks, embed those chunks, then retrieve a small set that looks relevant to the question.

Chunking is the mechanism that decides what parts of your site are eligible to reach the model. Firecrawl’s RAG chunking guide describes the core tradeoff: smaller chunks match more precisely but lose context; larger chunks preserve relationships but dilute relevance.
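The tradeoff is easier to see with a toy splitter. This is a minimal fixed-size, word-based chunker with optional overlap; production pipelines often split on tokens, sentences, or headings instead, and the function name and parameters here are illustrative only.

```python
def chunk_words(text, size, overlap=0):
    """Split text into chunks of `size` words; neighbours share `overlap` words."""
    if size <= 0 or not 0 <= overlap < size:
        raise ValueError("need size > 0 and 0 <= overlap < size")
    words = text.split()
    step = size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):
            break  # the last chunk already reaches the end of the text
    return chunks


text = "one two three four five six seven eight nine ten"

small = chunk_words(text, size=4, overlap=1)   # more chunks, less context each
large = chunk_words(text, size=10)             # one chunk, everything mixed together

print(len(small), small)
print(len(large), large)
```

With small chunks, each piece is specific but may lose the sentence that gives it meaning; with one large chunk, everything is preserved but nothing stands out.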

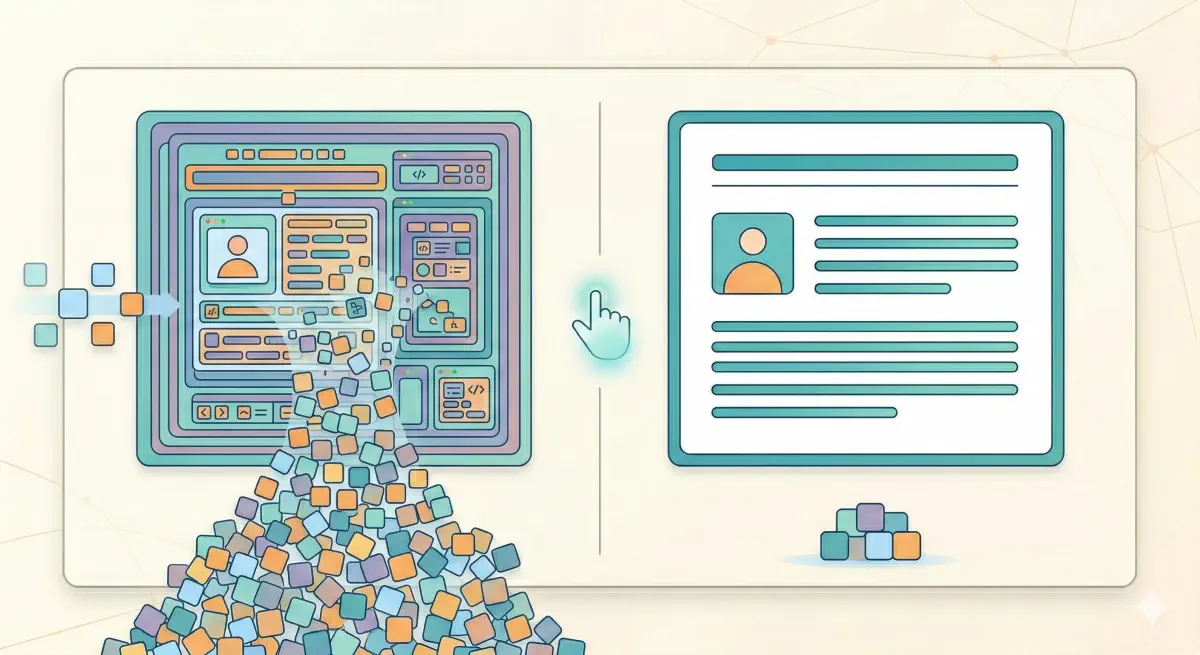

Now add low information density to that pipeline and you get a predictable failure mode: chunks get polluted.

What “polluted chunks” means:

- A chunk mixes the paragraph that answers the question with navigation, cookie UI strings, repeated CTAs, or template boilerplate.

- Embeddings reflect that mixed text, so the chunk’s meaning becomes vague instead of specific.

- The retriever pulls broad, low-precision chunks, and your real answer gets buried.
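The dilution effect in the list above can be illustrated with a toy similarity measure. The sketch uses bag-of-words cosine similarity as a stand-in for real embeddings, which behave differently in detail; the query, answer, and boilerplate strings are invented for the example.

```python
import math
import re
from collections import Counter


def bag(text):
    """Lowercased word counts; a crude stand-in for an embedding."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


query = "how does chunk size affect retrieval precision"
answer = "Smaller chunk size improves retrieval precision but loses context."
boilerplate = "Home Products Pricing Login Accept all cookies Subscribe to our newsletter"

clean = cosine(bag(query), bag(answer))
polluted = cosine(bag(query), bag(boilerplate + " " + answer))

# The polluted chunk contains the same answer but scores lower,
# because the unrelated words enlarge the vector without adding overlap.
print(f"clean={clean:.2f} polluted={polluted:.2f}")
```

The answer text is identical in both chunks; only the surrounding template strings changed the score.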

This is the same reason infrastructure vendors are investing in HTML-to-clean-text conversion. Cloudflare’s Markdown-for-Agents feature is an explicit attempt to reduce token waste and noise for “AI crawlers and agents.”

Boilerplate that survives extraction pollutes chunk embeddings and reduces how precisely they can be retrieved.

“Markdown for Agents” Is Content Negotiation, Not a Ranking Lever

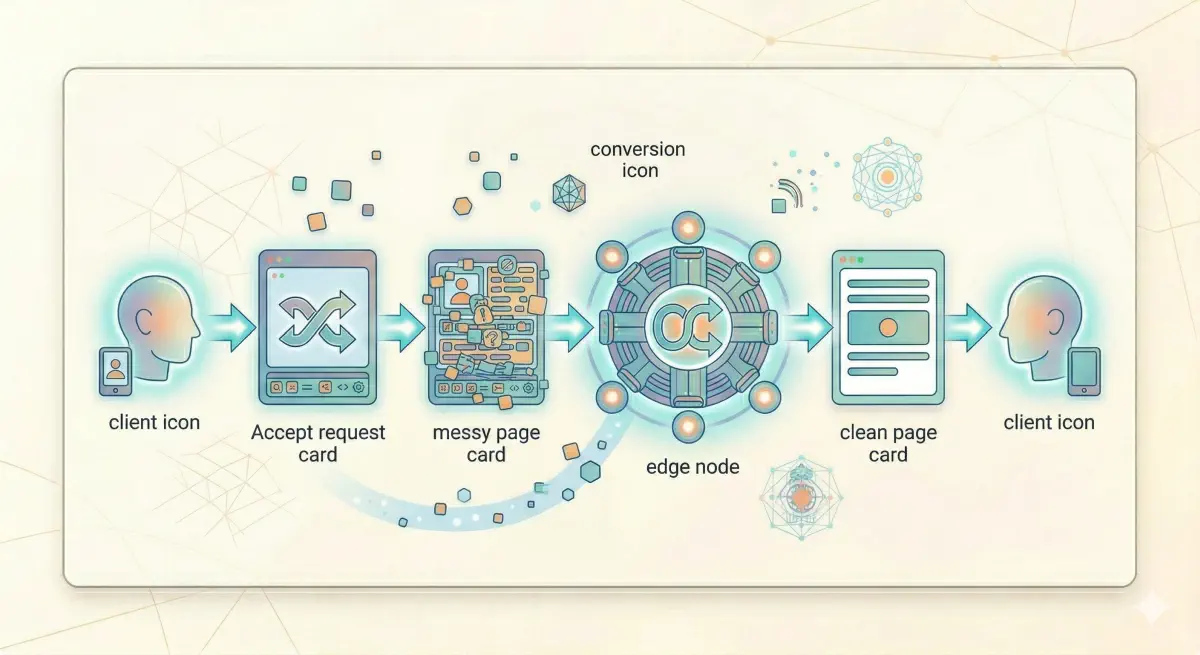

The shift is not “publish Markdown pages.” The shift is that the web is using standard HTTP content negotiation to serve a machine-friendly representation when the client asks for it.

Cloudflare’s implementation is straightforward: clients request text/markdown via Accept, and Cloudflare can fetch origin HTML and convert it to Markdown before serving it.
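On the serving side, the decision comes down to reading the Accept header and picking a representation. The following is a simplified sketch of that choice, not Cloudflare's implementation: it honours q-values but ignores finer points of HTTP negotiation such as media-range specificity, and it falls back to HTML instead of returning 406.

```python
def parse_accept(header):
    """Return [(media_type, q)] sorted by descending q (ties keep header order)."""
    prefs = []
    for part in header.split(","):
        fields = [f.strip() for f in part.split(";")]
        media_type = fields[0].lower()
        if not media_type:
            continue
        q = 1.0
        for field in fields[1:]:
            name, _, value = field.partition("=")
            if name.strip().lower() == "q":
                try:
                    q = float(value)
                except ValueError:
                    q = 0.0  # treat a malformed weight as "not acceptable"
        prefs.append((media_type, q))
    return sorted(prefs, key=lambda p: p[1], reverse=True)


def choose_representation(header, available=("text/html", "text/markdown")):
    """Pick the best available type; the first entry in `available` is the default."""
    for media_type, q in parse_accept(header):
        if q <= 0:
            continue
        if media_type in available:
            return media_type
        if media_type in ("*/*", "text/*"):
            return available[0]
    return available[0]  # simplification: serve the default rather than 406


print(choose_representation("text/markdown, text/html;q=0.8"))
print(choose_representation("text/html,application/xhtml+xml,*/*;q=0.8"))
```

A browser's usual Accept header still gets HTML, so human visitors see no change; only clients that explicitly ask for `text/markdown` receive it.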

Why this matters:

- It productizes the token tax problem: HTML is often too expensive and noisy for agents at scale.

- It normalizes “AI content negotiation” as a web pattern.

Caveats (keep these explicit):

- Cloudflare’s feature is evidence that token/format efficiency matters, not proof that Markdown directly improves citations.

- Blog/vendor claims like “X% RAG accuracy lift” are implementation-specific unless tied to a reproducible benchmark.

“Markdown for Agents” is HTTP content negotiation, not a content format you have to maintain separately.

llms.txt: A High-Density Map

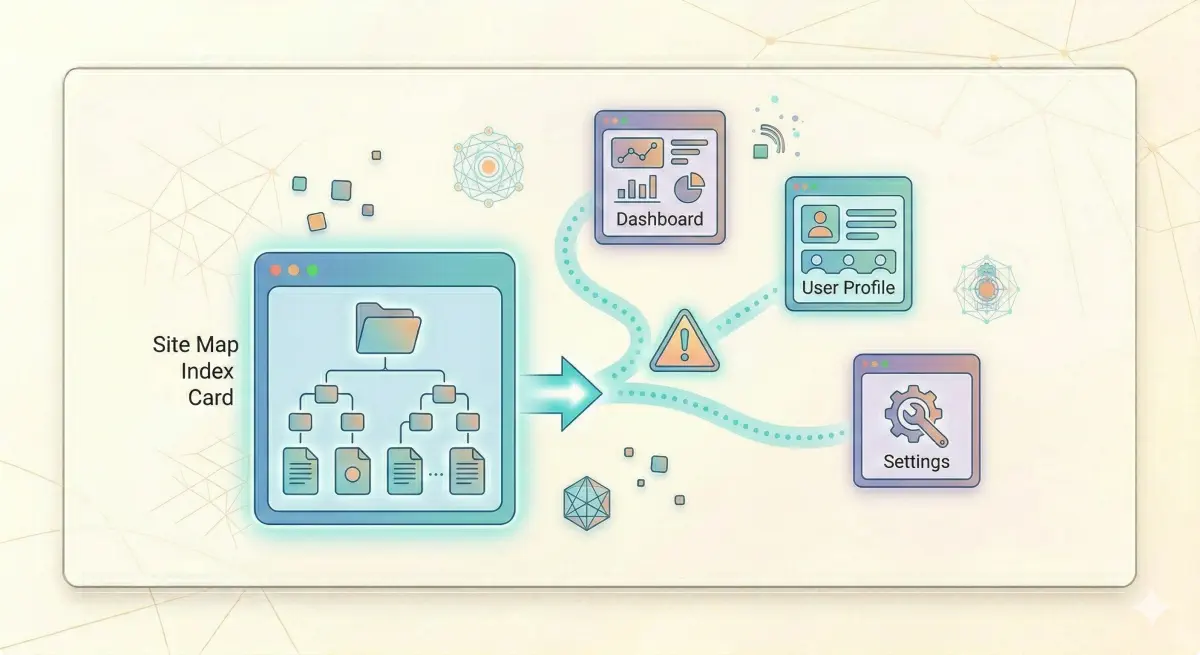

If Markdown-for-Agents is about serving a cleaner representation of a page, llms.txt is about giving machines a high-density index of what matters across the site: “start here, these are the key resources.”
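As a rough illustration, the sketch below assembles a small llms.txt file. It assumes the layout described in the public llms.txt proposal (an H1 title, a blockquote summary, then H2 sections of Markdown links with short notes); the site name, URL, and the `build_llms_txt` helper are hypothetical.

```python
def build_llms_txt(title, summary, sections):
    """Render a title, summary, and {heading: [(name, url, note), ...]} as text."""
    lines = [f"# {title}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for name, url, note in links:
            lines.append(f"- [{name}]({url}): {note}")
        lines.append("")
    return "\n".join(lines).rstrip() + "\n"


# Placeholder content for a fictional site.
doc = build_llms_txt(
    "Example Site",
    "Guides on information density for AI retrieval.",
    {
        "Guides": [
            (
                "Token costs",
                "https://example.com/guides/token-costs.md",
                "HTML versus Markdown token counts",
            ),
        ],
    },
)
print(doc)
```

The value is in the curation: a short list of canonical resources is more useful than a dump of every URL on the site.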

A recent guide to llms.txt reports large adoption and frames it as a low-cost way to help machines discover canonical resources.

Caveats stay important:

- llms.txt does not force any model or crawler to use it.

- It does not override robots/WAF, JavaScript rendering gaps, or slow responses.

- Evidence of measurable impact is mixed; Search Engine Land’s tracking experiment reported inconsistent results and even noted Google briefly added then removed llms.txt from parts of its docs.

The right posture: low effort, plausible upside for discovery and efficiency, zero guarantees.

Diagnose Your Noise Level

You don’t need a “ratio score” to act on this. What you need is a practical way to answer two questions:

- Is the content you care about present in the raw HTML that crawlers fetch?

- Is the extracted representation “clean enough” that chunks are mostly content, not template noise?

BeSeenBy.ai is designed for this eligibility layer. Its Content Visibility diagnostic compares JavaScript-rendered content against raw HTML and reports a word count gap analysis, plus structural signals pulled from raw HTML.
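The idea behind a word count gap can be sketched in a few lines. This is not BeSeenBy.ai's implementation, only an illustration of the comparison: it assumes you already have the raw HTML and a JavaScript-rendered snapshot of the same page (for example from a headless browser, not shown here), and the sample markup is invented.

```python
import re
from html.parser import HTMLParser


class _Text(HTMLParser):
    """Collect visible text, skipping <script> and <style> contents."""

    def __init__(self):
        super().__init__()
        self.parts = []
        self._skip = 0

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip:
            self._skip -= 1

    def handle_data(self, data):
        if not self._skip:
            self.parts.append(data)


def visible_words(html):
    parser = _Text()
    parser.feed(html)
    parser.close()
    return re.findall(r"\w+", " ".join(parser.parts))


def word_gap(raw_html, rendered_html):
    """Compare word counts between the raw and the rendered page."""
    raw = len(visible_words(raw_html))
    rendered = len(visible_words(rendered_html))
    missing = max(rendered - raw, 0)
    share = missing / rendered if rendered else 0.0
    return {"raw": raw, "rendered": rendered, "missing": missing, "missing_share": share}


# Hypothetical single-page app: the raw HTML is an empty shell.
raw_html = '<div id="app"></div><script>render()</script>'
rendered_html = '<div id="app"><h1>Pricing</h1><p>Plans start at ten dollars.</p></div>'
print(word_gap(raw_html, rendered_html))
```

A missing share near 1.0 means a crawler that does not execute JavaScript sees almost none of the page's text.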

A workflow that maps to this article’s thesis:

Step 1: Scan representative templates. Run audits for a blog post, a product/service page, a category/list page, and a “money flow” page (checkout/booking/lead form). Templates, not individual URLs, usually drive the noise.

Step 2: Look at the content visibility gap. If important text is missing from raw HTML, that is a harder failure than “ratio.” Many systems will never see the content at all. BeSeenBy.ai surfaces missing HTML content via JS vs HTML comparison and word count gap analysis.

Step 3: Look for structural clues of extraction clutter. BeSeenBy.ai also reports semantic heading hierarchy and ARIA label/role coverage counts from raw HTML. These don’t replace a full accessibility audit, but they help indicate whether the page exposes a clean, structured interface to machines.
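Signals like these can be approximated from raw HTML with the standard library. The sketch below is an illustration of the kind of check described in Step 3, not the tool's actual logic: it records heading levels in document order, flags jumps that skip a level, and counts `aria-label` and `role` attributes. The class and function names are made up for the example.

```python
from html.parser import HTMLParser


class StructureScan(HTMLParser):
    """Record heading levels and count ARIA label/role attributes."""

    def __init__(self):
        super().__init__()
        self.heading_levels = []
        self.aria_labels = 0
        self.roles = 0

    def handle_starttag(self, tag, attrs):
        # h1..h6 only; tags like <hr> or <header> do not match.
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.heading_levels.append(int(tag[1]))
        names = {name for name, _ in attrs}
        if "aria-label" in names:
            self.aria_labels += 1
        if "role" in names:
            self.roles += 1


def skipped_levels(levels):
    """Return (previous, current) pairs where a heading skips a level going down."""
    return [(a, b) for a, b in zip(levels, levels[1:]) if b - a > 1]


# Hypothetical markup with an h2 followed directly by an h4.
html = (
    '<h1>Guide</h1><nav role="navigation" aria-label="Main"></nav>'
    "<h2>Setup</h2><h4>Details</h4>"
)
scan = StructureScan()
scan.feed(html)
scan.close()
print(scan.heading_levels, skipped_levels(scan.heading_levels))
print(scan.aria_labels, scan.roles)
```

Run it across one page per template; a skipped level or a page with no headings at all usually points at the template rather than the individual article.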

Step 4: Prioritize fixes that increase information density without harming UX. High-ROI fixes often look like:

- Reduce repeated boilerplate in the main content region

- Move navigation/utility content out of the main article container

- Keep core answers early and clean (so even partial extraction captures the key point)

- Ship a machine-friendly representation when feasible (Markdown negotiation, llms.txt), with the caveats from earlier sections

Start with templates, not individual URLs. One fix usually resolves the issue across hundreds of pages.

A content gap (content in JS-rendered view but missing from raw HTML) is a harder problem than ratio—some systems never see that content at all.

Ignore the Ratio, Fix the Density

Text-to-HTML ratio is not a “ranking factor,” and Google’s public messaging has been clear that it’s not a metric to optimize for in classic SEO.

AI retrieval systems work within token and chunk limits. In that environment, low information density becomes a real cost. Cloudflare’s own example shows how dramatic the token difference can be between raw HTML and a cleaner representation.

The practical approach is simple:

- Make sure the content is present in raw HTML (not only after JavaScript runs).

- Reduce template noise in the main content stream so chunks stay specific.

- Use machine-friendly representations (text/markdown, llms.txt) as optional efficiency layers, not as guaranteed visibility levers.

BeSeenBy.ai fits here as a diagnostic: it helps you check whether your content is visible to crawlers and whether the page structure points to cleaner extraction before you spend more time on downstream optimization.

The fix sequence: content in raw HTML first, noise reduction second, machine-friendly representations as optional additions.

The metric to ignore is the ratio. The metric to act on is information density and extraction quality.